Public LLM benchmarks misled me on Opus 4.7

A friend asked me last week how Opus 4.7 was holding up on GrandpaCAD. I dismissed him. Here's the data I dismissed him with:

- OpenRouter throughput. Gemini 3.1 sits around 62 tokens/second p50. Opus (when I last checked) was around 45.

- Design Arena 3D Benchmark. Kimi K2.6 has 1369 ELO, Gemini 3.1 has 1320, Opus 4.5 has 1299.

- Token prices. Opus tokens cost roughly 3x more than Gemini, 10x more than Kimi.

Three independent sources, all pointing the same way: faster, better, cheaper somewhere else. To be fair, I'd been dismissing Claude updates for a while. Sonnet 4.5 lost to Gemini on this exact workload last year, and I never even bothered running an Opus model because the per-token price scared me off before the eval did. Claude Code also feels sluggish in my day-to-day work, which only reinforced the story. So why bother running the eval?

I ran it anyway. The numbers came back upside down.

What the eval suite actually showed

I ran my standard eval harness against four frontier models: Opus 4.7 on auto thinking, Gemini 3.1 on medium thinking budget, GPT 5.5 with service_tier: priority, and Kimi K2.6 on Baseten (the fastest provider I could find for it).

| Metric | Opus 4.7 | Gemini 3.1 | GPT 5.5 | Kimi K2.6 |

|---|

| Weighted score | 0.587 | 0.556 | 0.501 | 0.545 |

| Adherence | 0.584 | 0.614 | 0.591 | 0.481 |

| Pass rate | 85.7% | 76.2% | 90.5% | 66.7% |

| Error rate | 9.5% | 0.0% | 0.0% | 14.3% |

| Code retries (avg) | 0.19 | 0.24 | 0.10 | 0.52 |

| Avg duration | 0m 32s | 1m 32s | 1m 46s | 0m 53s |

| Avg cost | $0.10 | $0.21 | $0.94 | $0.02 |

| Total benchmark cost | $2.04 | $4.48 | $19.79 | $0.51 |

A few things stand out.

Opus 4.7 is the fastest. 32 seconds per generation. Gemini 3.1 takes 1m 32s. GPT 5.5 takes 1m 46s. The OpenRouter throughput chart says Gemini is faster on tokens-per-second, and that's true if you measure tokens. But thinking models burn tokens you don't see, and what actually matters is wall-clock time on your prompt. Opus thinks less, ships sooner. I can run three Opus generations in the time Gemini finishes one.

Opus 4.7 has the highest weighted score. 0.587, ahead of Gemini 3.1 (0.556) and GPT 5.5 (0.501).

Opus 4.7 is half the cost of Gemini, one tenth the cost of GPT 5.5. $0.10 per generation versus $0.21 versus $0.94. Token-price comparisons don't account for thinking budgets or how many tokens each model actually spends. Per finished 3D model, Opus is the cheap one.

Why 3D is the highest-signal LLM benchmark you can run

This is the part I keep coming back to. If you read text from GPT 5.5, Opus 4.7, and Gemini 3.1 side by side, you genuinely cannot tell which one is smarter. They all sound competent, they all hold the thread, and the differences hide in places you won't notice for months: subtle factual drift, quiet bias, reasoning that looks right and doesn't quite hold under load.

Code is a step better. Code that doesn't compile, doesn't compile. You catch logic errors at the unit-test boundary. But there are still bugs that hide for months because they only fire on specific inputs.

3D is different. The hook either connects to the wall plate or it doesn't. The phone stand either holds the phone or it tips over. The chair legs either touch the ground or they float. Your eyes catch broken 3D in 200 milliseconds, the same way you spot a typo in your own name. There's no abstract intermediate layer where bugs can hide. Pattern recognition for physical objects is the oldest module in your visual cortex, and it doesn't miss.

That's why public 3D ELO charts diverge so hard from real-world 3D performance. Voting on a side-by-side render is not the same as actually generating a printable model from a real user prompt and watching it succeed or fail. The gap is huge.

I think GrandpaCAD's eval suite, by accident, ended up being one of the highest-signal benchmarks for frontier model reasoning that exists. Not because I'm clever, but because 3D is unforgiving in a way text and code are not.

The Kimi K2.6 trap

Kimi K2.6 is the most interesting model in this comparison. It looked unbeatable on paper:

- Highest 3D ELO on Design Arena (1369, ahead of Gemini and Opus)

- Cheapest in my benchmark ($0.02 per generation, one fifth of Opus)

- Fastest inference on the right provider (Baseten clocks it at up to 120 tokens/second, double the typical rate)

I went into this thinking Kimi might just walk away with it. A third the price, double the speed, and a higher 3D ELO than the model I was testing it against.

It didn't. Kimi K2.6 had the lowest adherence (0.481), the highest error rate (14.3%), and the lowest pass rate (66.7%). It also needed nearly triple the code retries that Opus needed.

So: the public 3D ELO put Kimi at the top, the provider gave it the fastest throughput in the test, and the per-token price was unbeatable. Three separate signals saying winner. The actual generations were the worst of the four.

ELO measures preference between two static renders. It does not measure whether a model can take a real user prompt, write OpenSCAD or Python code that doesn't crash on the first try, and produce a printable result. Different problem entirely.

GPT 5.5 priority is more accurate. It's also too slow to ship.

GPT 5.5 with priority service tier had the highest pass rate (90.5%) and the lowest code-error rate (0.10 retries on average). It is genuinely a strong model.

It is also slow. Average generation took 1m 46s, 3.3x slower than Opus 4.7. And the way GrandpaCAD users actually work is iterative: prompt, eyeball the result, tweak, prompt again. Speed is the experience. I'd rather give a user three quick attempts at the model they want than make them wait for one slower polished one. The pass-rate gap is real (90.5% vs 85.7%) but it's less than five percentage points, and three Opus generations almost always beat one GPT 5.5 generation in practice.

The cost story is secondary but striking once you put the numbers next to each other. Priority tier raises the API price by 2.5x, and the average generation cost on this workload was $0.94 versus Opus's $0.10.

For the same benchmark, GPT 5.5 cost me $19.79. Opus cost me $2.04.

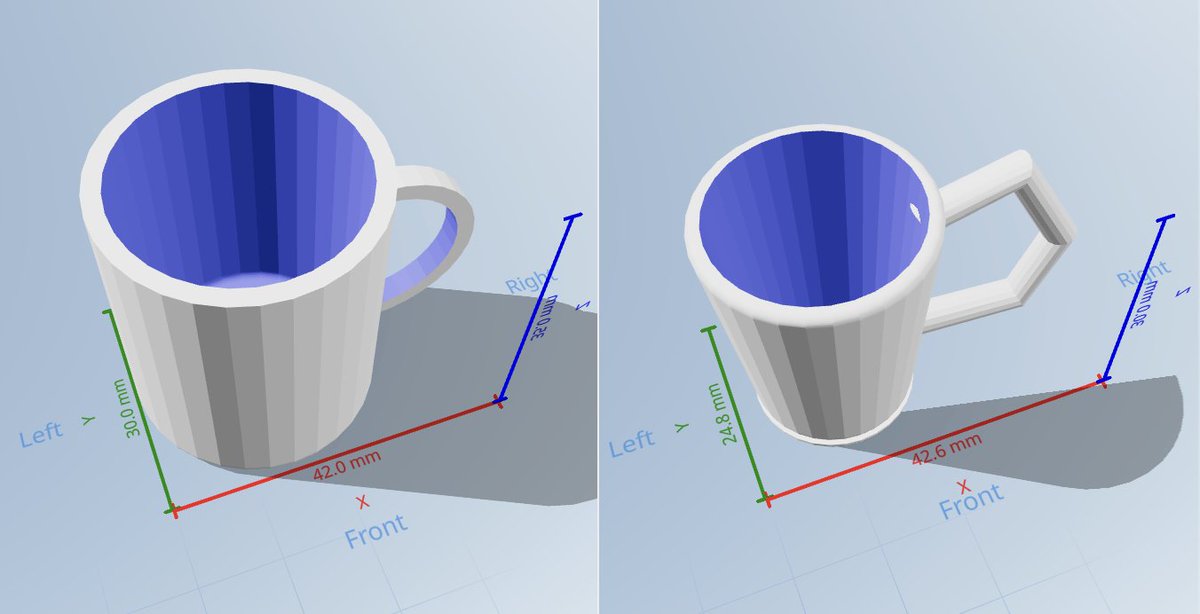

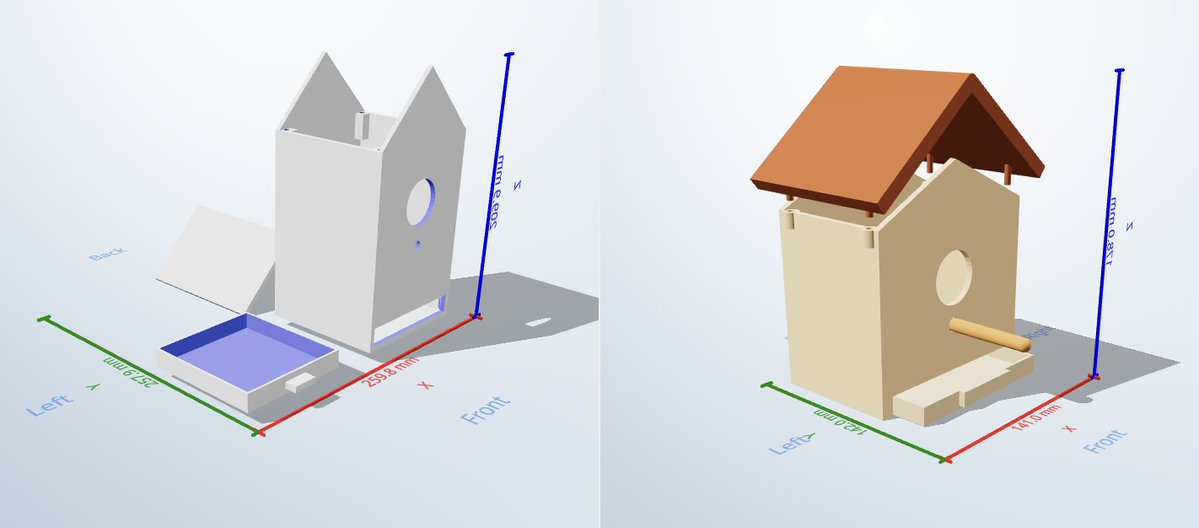

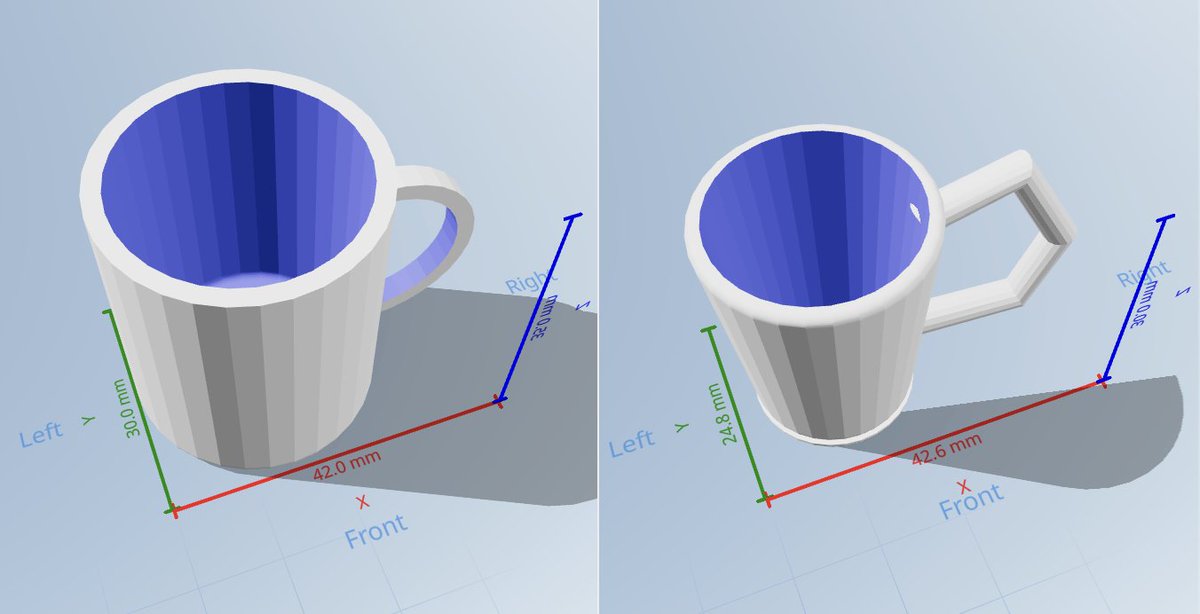

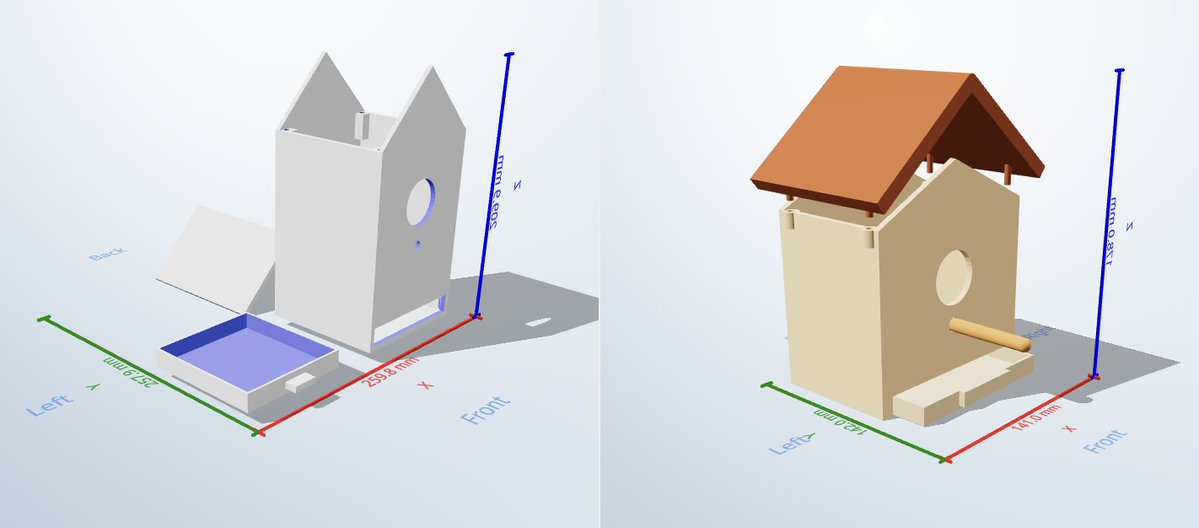

I posted a side-by-side comparison of Opus 4.7 and GPT 5.5 on the same prompt if you want to eyeball the visual difference.

Why I don't trust public benchmarks anymore

Two reasons.

First, the 3D ELO benchmarks measure the wrong thing. Side-by-side preference voting on render quality is not the same as end-to-end "did this prompt produce a useful printable model." Kimi was the canary on this one. It topped the chart and finished last on real work.

Second, labs tend to publish their own benchmarks. Cursor's Composer 2 benchmark post (March 2026) is a recent example. Read it and decide for yourself how much weight to put on numbers a lab publishes about its own model. The pattern is general: if a number is published by the entity that benefits from the number being high, treat it as marketing until proven otherwise.

The only benchmark I trust now is the one I run on my own workload, against my own prompts, under my own eval harness. Everything else is decoration.

Decide for your use case. Then test for your use case.

If you're shipping a 3D code-generation product, test on real 3D code generation. If you're shipping legal summarization, test on legal summarization. Your benchmark almost certainly disagrees with the public benchmarks for the same models, because public benchmarks measure averages across tasks that aren't yours.

For my workload (text-to-3D, OpenSCAD and Blender, real user prompts), Opus 4.7 wins on speed, cost, and weighted score. GrandpaCAD now defaults to Opus 4.7.

If you want the raw data, the /evals page has every run logged, including the ones that broke. The methodology is in how we test the 3D modelling agent. The previous benchmark, where Gemini 3 won, is in comparing state-of-the-art LLMs for 3D generation. The leaderboard moves fast.